Architecting High Volume Audit Logging Systems

Design principles for building reliable and scalable audit pipelines

A deep exploration of how to architect high volume, tamper evident audit logging systems capable of supporting enterprise scale workloads.

Audit logging is no longer a background concern limited to low volume applications. Modern systems generate millions or billions of events each month. SaaS platforms, distributed architectures, and global user bases produce a constant stream of actions that need to be captured, validated, stored, and secured.

A high volume audit logging system must deliver consistent performance, integrity, and reliability, even during peak load events. It must scale with business growth, maintain cryptographic guarantees, and ensure that every event is captured accurately. This article examines the principles of designing such systems, the challenges at scale, and architectural patterns that support dependable audit pipelines.

The Unique Requirements of High Volume Audit Logging

Audit logging differs from traditional application logging in several ways, and its requirements are usually more stringent. Application logs can often tolerate sampling or occasional loss, whereas an audit trail cannot.

Guaranteed capture of every event

Audit trails cannot accept data loss. An event that is not recorded creates a gap in the historical record, which may:

- compromise investigations

- violate compliance requirements

- obscure evidence of malicious activity

- undermine trust in the system

Systems must ensure that every event is captured and persisted even during network failures or burst traffic.

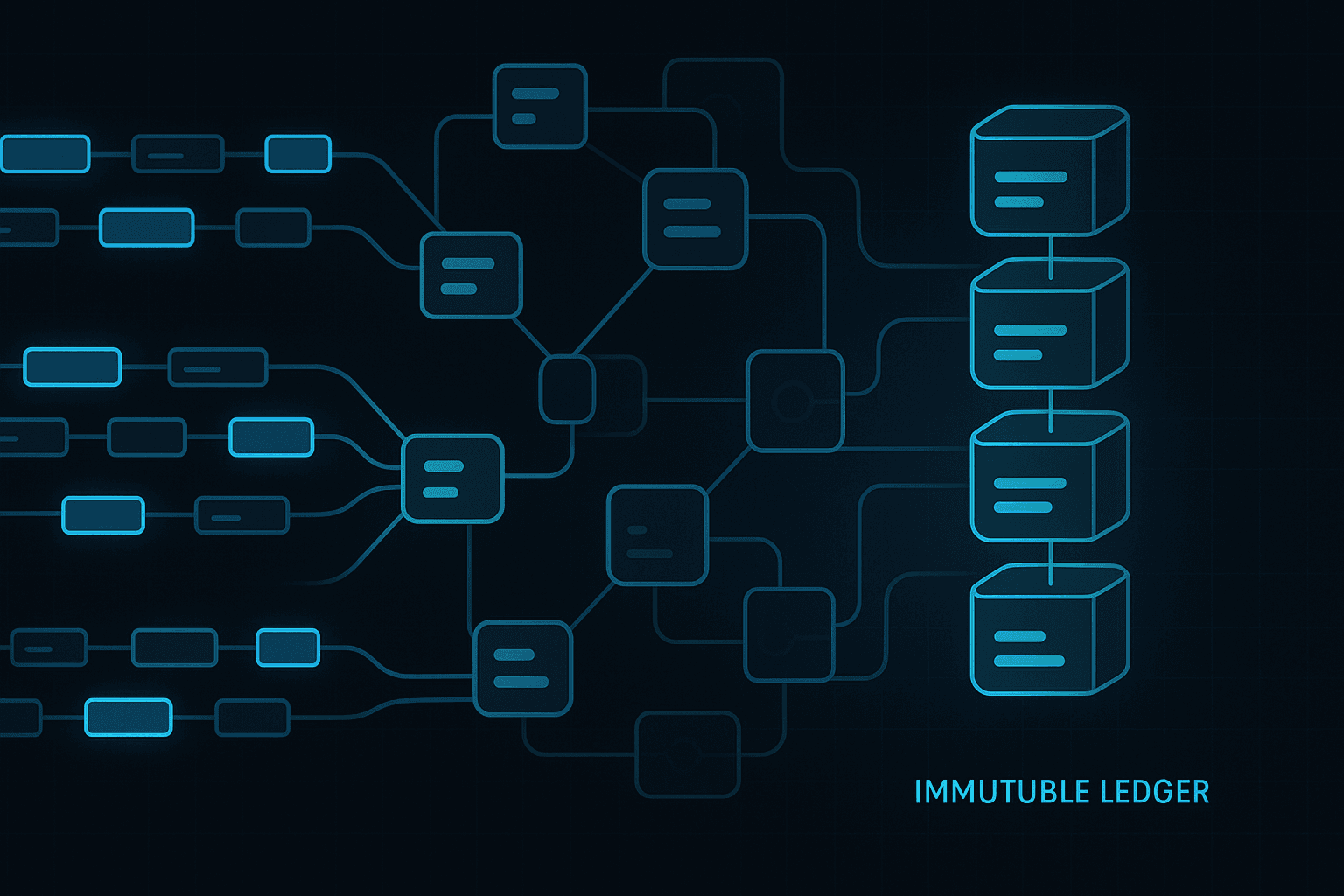

Immutability and tamper evidence

Audit logs must be immutable. High volume systems need to maintain cryptographic integrity across millions of records without sacrificing performance. This requires efficient hash chain management, append only structures, and secure storage.
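As a minimal sketch of the hash chain idea, the following assumes SHA-256 and a canonical JSON serialisation; neither choice comes from a specific product, and the names `GENESIS_HASH`, `chain_hash`, and `append_event` are hypothetical. Each record stores the hash of the previous record, so altering any earlier event changes every hash that follows it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed starting value for an empty chain


def canonical_bytes(event: dict) -> bytes:
    """Serialise an event deterministically so equal events hash equally."""
    return json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")


def chain_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous link together with the canonical event bytes."""
    return hashlib.sha256(prev_hash.encode("ascii") + canonical_bytes(event)).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append a record whose hash covers the event and the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    record = {"event": event, "prev_hash": prev_hash, "hash": chain_hash(prev_hash, event)}
    log.append(record)
    return record
```

The in-memory list stands in for an append only store. Sorting keys before hashing matters because two services may emit the same event with fields in a different order.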

Low latency ingestion

User interfaces and backend processes often rely on immediate confirmation that actions have been logged. High ingestion latency can stall those workflows or leave callers unsure whether an action was recorded.

Efficient querying for investigations

Once stored, audit records must be searchable. Investigations often require filtering by:

- actor

- resource

- event type

- time window

- project or tenant

High volume systems must support fast queries across large datasets.

Long term retention and durability

Audit trails may need to be retained for years. This requires cold storage strategies, archiving, and efficient retrieval of historical data.

Challenges in High Volume Audit Logging

Burst traffic and uneven loads

User behaviour is unpredictable. Systems may experience sudden bursts of audit events during:

- peak business hours

- batch operations

- permission changes

- automated processes

- mass imports or exports

Architectures must handle sudden spikes without losing events.

Distributed system complexity

Microservices generate audit events from many locations. Ensuring consistent formatting, ordering, and hashing across services adds architectural complexity.

Storage explosion

Audit events accumulate over time. Even modest event rates compound into large datasets over a multi year retention period. Storing, indexing, and retrieving these volumes requires careful planning.

Ensuring consistent hashing

Hash chains depend on precise ordering and on deterministic serialisation of each event. In a distributed environment, where several writers may append concurrently, maintaining consistent chain state is non trivial.

Architectural Patterns for High Volume Audit Pipelines

Event gateways

A central event gateway acts as the entry point for all audit logs. It:

- validates inputs

- ensures schema compliance

- enriches metadata

- rate limits if necessary

- authenticates API keys

This centralisation reduces inconsistencies.
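The gateway steps above could be sketched roughly as follows. The required fields, the `VALID_API_KEYS` mapping, and the `accept_event` function are all hypothetical, and rate limiting is left out to keep the example short.

```python
from datetime import datetime, timezone

REQUIRED_FIELDS = ("actor", "action", "target")  # assumed minimal schema
VALID_API_KEYS = {"demo-key": "tenant-a"}  # hypothetical key-to-tenant mapping


class RejectedEvent(Exception):
    """Raised when an event fails authentication or validation."""


def accept_event(api_key: str, event: dict) -> dict:
    # Authenticate the caller and resolve which tenant the key belongs to.
    tenant = VALID_API_KEYS.get(api_key)
    if tenant is None:
        raise RejectedEvent("unknown API key")

    # Check schema compliance: every required field must be present and non-empty.
    missing = [name for name in REQUIRED_FIELDS if not event.get(name)]
    if missing:
        raise RejectedEvent(f"missing fields: {', '.join(missing)}")

    # Enrich with metadata the producer should not be trusted to supply.
    enriched = dict(event)
    enriched["tenant"] = tenant
    enriched["received_at"] = datetime.now(timezone.utc).isoformat()
    return enriched
```

Deriving the tenant from the API key, rather than accepting it from the payload, is one way to keep a producer from writing into another tenant's trail.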

Append only log stores

Append only structures are ideal for audit trails. They support:

- fast writes

- natural ordering

- immutability

- efficient hashing

Examples include specialised ledger stores or write optimised databases.

Stream processing

Audit events can be processed using event streams to handle high throughput. Technologies include:

- Kafka

- Kinesis

- Pulsar

Streams allow buffering, ordering, and parallel processing.
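To illustrate how a stream can preserve ordering while still processing in parallel, the sketch below assigns events to partitions by hashing a key. It does not use any broker's client library; real brokers ship their own partitioners, and `partition_for` and `assign` are hypothetical stand-ins that assume the tenant is the ordering key.

```python
import hashlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a stable partition number using a hash of the key."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def assign(events: list, num_partitions: int) -> dict:
    """Group events by partition, keeping arrival order within each partition."""
    partitions = defaultdict(list)
    for event in events:
        partitions[partition_for(event["tenant"], num_partitions)].append(event)
    return dict(partitions)
```

Because every event for a given tenant lands in the same partition, one consumer sees that tenant's events in order, while other partitions are processed independently.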

Dual write strategies for resilience

Systems may write to both:

- a high speed ingestion pipeline

- a durable ledger store

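If one path fails, the other still holds the event, and the failed path can be repaired from it afterwards. The two stores must be reconciled periodically so that a partial failure does not leave them permanently out of step.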
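A dual write could be sketched as below, under the assumption that each sink is exposed as a simple callable. The `dual_write` function and the `repair_queue` list are hypothetical; a production system would persist the repair queue rather than hold it in memory.

```python
def dual_write(event: dict, write_stream, write_ledger, repair_queue: list) -> bool:
    """Attempt both writes; report success if at least one sink holds the event."""
    results = {}
    for name, write in (("stream", write_stream), ("ledger", write_ledger)):
        try:
            write(event)
            results[name] = True
        except Exception:  # a real system would catch narrower error types
            results[name] = False

    if not any(results.values()):
        return False  # neither sink has the event; the caller must retry

    # Remember which sink missed the event so it can be replayed later.
    for name, ok in results.items():
        if not ok:
            repair_queue.append((name, event))
    return True
```

Returning `False` only when both writes fail keeps the caller's contract simple: a `True` result means the event exists somewhere durable, and the repair queue records what still needs reconciling.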

Hash chaining at scale

Large scale hash chaining requires:

- batched hashing

- periodic checkpoints

- parallel hashing

- deterministic ordering

This approach balances performance with cryptographic integrity.
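One possible way to combine these four ideas is to hash each event independently, fold a batch of hashes into a single Merkle root, and chain that root to the previous checkpoint. This is an illustrative technique rather than a prescribed design; the `sequence` field, the domain separation bytes, and all function names are assumptions.

```python
import hashlib
import json
from concurrent.futures import ThreadPoolExecutor


def leaf_hash(event: dict) -> bytes:
    """Hash one event on its own, so leaves can be computed independently."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(b"\x00" + payload).digest()


def merkle_root(leaves: list) -> bytes:
    """Fold leaf hashes pairwise; an unpaired node is promoted unchanged."""
    if not leaves:
        return hashlib.sha256(b"").digest()
    level = list(leaves)
    while len(level) > 1:
        nxt = []
        for i in range(0, len(level) - 1, 2):
            nxt.append(hashlib.sha256(b"\x01" + level[i] + level[i + 1]).digest())
        if len(level) % 2:
            nxt.append(level[-1])
        level = nxt
    return level[0]


def checkpoint(prev_checkpoint: str, batch: list) -> str:
    """Hash a batch and chain its root to the previous checkpoint (hex strings)."""
    ordered = sorted(batch, key=lambda e: e["sequence"])  # deterministic ordering
    with ThreadPoolExecutor() as pool:
        leaves = list(pool.map(leaf_hash, ordered))  # map preserves input order
    root = merkle_root(leaves)
    return hashlib.sha256(bytes.fromhex(prev_checkpoint) + root).hexdigest()
```

Only the checkpoint step is sequential, so the chain advances once per batch instead of once per event. The thread pool here mainly shows that leaf hashing has no dependency between events; for small payloads in CPython, a process pool or a native implementation would be needed for a real speed-up.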

Hierarchical storage tiers

Storage can be divided into:

- hot storage for recent events

- warm storage for near term history

- cold storage for long term archives

This balances cost and performance.
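A tiering policy can be as simple as a function of event age. The 30 day and 365 day thresholds below are placeholder assumptions; actual windows depend on query patterns and retention obligations.

```python
from datetime import datetime, timedelta, timezone

HOT_WINDOW = timedelta(days=30)    # assumed threshold for recent events
WARM_WINDOW = timedelta(days=365)  # assumed threshold for near term history


def storage_tier(event_time: datetime, now: datetime) -> str:
    """Pick a storage tier from the age of the event."""
    age = now - event_time
    if age <= HOT_WINDOW:
        return "hot"
    if age <= WARM_WINDOW:
        return "warm"
    return "cold"
```

Passing `now` explicitly, for example `storage_tier(ts, datetime.now(timezone.utc))`, keeps the policy easy to test and to re-run during migrations.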

Designing Event Schemas for Scale

A scalable audit system requires a consistent and well defined event schema.

Core attributes

Every event should contain:

- actor

- action

- target

- timestamp

- context

- result

- eventId
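The attributes listed above might be expressed as a frozen dataclass. The field types, the ISO 8601 timestamp, and the UUID based `eventId` are assumptions chosen for illustration, not a prescribed format.

```python
import uuid
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """One audit record; frozen so instances cannot be modified after creation."""
    actor: str    # who performed the action, e.g. "user:42"
    action: str   # what was done, e.g. "document.delete"
    target: str   # what it was done to, e.g. "doc:7"
    result: str   # outcome, e.g. "success" or "denied"
    context: dict = field(default_factory=dict)  # request metadata such as IP address
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    eventId: str = field(default_factory=lambda: str(uuid.uuid4()))


event = AuditEvent(actor="user:42", action="document.delete", target="doc:7", result="success")
record = asdict(event)  # plain dict, ready for serialisation
```

Generating `eventId` at creation time gives downstream consumers a stable key for deduplication if the same event is delivered more than once.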

Normalisation

Consistent naming, data types, and formats prevent downstream confusion.

Schema evolution

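Audit schemas must evolve without breaking historical data. Because stored records are immutable, older events cannot be rewritten to match a new schema; readers must be able to interpret every version that has ever been written.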
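One common approach, sketched here under assumed names, is to tag each event with a schema version and upgrade old versions at read time. The `schema_version` field and the rename from `user` to `actor` are hypothetical examples of a change.

```python
CURRENT_VERSION = 2


def _v1_to_v2(event: dict) -> dict:
    # Hypothetical change: v1 used "user", v2 renamed it to "actor".
    upgraded = {k: v for k, v in event.items() if k != "user"}
    upgraded["actor"] = event["user"]
    upgraded["schema_version"] = 2
    return upgraded


UPGRADERS = {1: _v1_to_v2}  # maps a version to the function that lifts it one step


def upcast(event: dict) -> dict:
    """Return the event in the current schema without modifying the stored copy."""
    version = event.get("schema_version", 1)  # untagged events are treated as v1
    while version < CURRENT_VERSION:
        event = UPGRADERS[version](event)
        version = event["schema_version"]
    return event
```

The stored bytes, and therefore any hashes computed over them, stay untouched; only the in-memory view handed to query code is upgraded.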

Partitioning and Indexing Strategies

Large scale systems require efficient partitioning. Common strategies include partitioning by:

- tenant

- event type

- time window

- resource

This allows targeted queries that avoid scanning the entire dataset.
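As a rough example, a composite key of tenant and UTC day lets a query name exactly the partitions it needs. The `tenant=.../date=...` layout is an assumed convention, and both function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def partition_key(tenant: str, timestamp: datetime) -> str:
    """Build the partition an event belongs to from its tenant and UTC day."""
    day = timestamp.astimezone(timezone.utc).strftime("%Y-%m-%d")
    return f"tenant={tenant}/date={day}"


def partitions_for_range(tenant: str, start: datetime, end: datetime) -> list:
    """List only the partitions a time window query has to read."""
    day = start.astimezone(timezone.utc).date()
    last = end.astimezone(timezone.utc).date()
    keys = []
    while day <= last:
        keys.append(f"tenant={tenant}/date={day.isoformat()}")
        day += timedelta(days=1)
    return keys
```

Normalising to UTC before taking the date avoids the same event landing in different partitions depending on the writer's local time zone.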

Indexing considerations

Indexes should be created on:

- timestamp

- actor

- resource

- project

- tenant

Each index speeds up reads but adds work to every write, so index only the fields that investigations actually filter on.
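The effect of an index can be checked by asking the database for its query plan. The sketch below uses SQLite from Python's standard library purely as a stand-in for whatever store is actually in use; the table, column, and index names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE audit_events ("
    "event_id TEXT PRIMARY KEY, tenant TEXT, actor TEXT, resource TEXT, ts TEXT)"
)
# Composite index matching a common investigation filter: tenant, then actor, then time.
conn.execute("CREATE INDEX idx_tenant_actor_ts ON audit_events (tenant, actor, ts)")

# Ask SQLite how it would execute a typical investigation query.
plan = conn.execute(
    "EXPLAIN QUERY PLAN "
    "SELECT event_id FROM audit_events WHERE tenant = ? AND actor = ? AND ts >= ?",
    ("acme", "alice", "2024-01-01"),
).fetchall()
plan_text = " ".join(row[3] for row in plan)  # the fourth column holds the plan detail
print(plan_text)
```

Column order in a composite index matters: equality filters such as tenant and actor come first, with the range filter on the timestamp last.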

Ensuring Durability and Fault Tolerance

Replication

Data should be replicated across multiple availability zones or regions.

Write ahead logging

Write ahead logs ensure durability before events are fully processed.
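A very small write ahead log can be sketched with a local file, assuming one JSON document per line. The function names are hypothetical, and the sketch ignores log rotation and torn final lines, which a real implementation would have to handle.

```python
import json
import os


def wal_append(path: str, event: dict) -> None:
    """Append one event and force it to disk before returning."""
    line = json.dumps(event, sort_keys=True) + "\n"
    with open(path, "a", encoding="utf-8") as f:
        f.write(line)
        f.flush()              # push Python's buffer to the operating system
        os.fsync(f.fileno())   # ask the operating system to flush to the device


def wal_replay(path: str) -> list:
    """Read back every logged event, for example after a crash."""
    if not os.path.exists(path):
        return []
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Acknowledging the producer only after `wal_append` returns means a crash during later processing can be recovered by replaying the file.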

Backpressure handling

Systems must handle overload gracefully, using bounded queues and buffers that slow producers down rather than silently dropping events.
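A bounded buffer is one simple way to apply backpressure. In this sketch, built on the standard library `queue` module, `BoundedIngestBuffer` is a hypothetical name and the timeout value is arbitrary.

```python
import queue


class BoundedIngestBuffer:
    """Fixed capacity buffer that refuses new events when full instead of dropping old ones."""

    def __init__(self, capacity: int):
        self._queue = queue.Queue(maxsize=capacity)

    def offer(self, event: dict, timeout: float = 0.05) -> bool:
        """Try to enqueue; return False if the buffer stays full for `timeout` seconds."""
        try:
            self._queue.put(event, timeout=timeout)
            return True
        except queue.Full:
            return False

    def drain(self, max_items: int) -> list:
        """Remove and return up to `max_items` events in arrival order."""
        items = []
        while len(items) < max_items:
            try:
                items.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return items
```

When `offer` returns `False`, the producer still holds the event and can retry or signal overload upstream, so nothing is lost inside the pipeline.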

Dead letter queues

Events that fail validation or processing should be routed to a dead letter queue for manual review rather than discarded.
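The routing decision might look like the following sketch, where plain lists stand in for the main store and the dead letter queue. The `route_events` and `require_actor` functions are hypothetical.

```python
def require_actor(event: dict) -> None:
    """Example validator: reject events that do not name an actor."""
    if not event.get("actor"):
        raise ValueError("missing actor")


def route_events(events, validate, main_sink: list, dead_letters: list) -> None:
    """Send valid events onward and park invalid ones with the reason they failed."""
    for event in events:
        try:
            validate(event)
        except ValueError as exc:
            # Keep the original payload and the error so a reviewer can act on it.
            dead_letters.append({"event": event, "error": str(exc)})
        else:
            main_sink.append(event)
```

Storing the failure reason next to the untouched payload makes it possible to fix the producer and replay the event later.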

Security and Integrity

Encryption

Use encryption for both data at rest and data in transit.

Role based access

Analysts and systems should be granted only the minimum access they need to the audit store, with write access limited to the ingestion path.

Tamper evidence

Cryptographic integrity checks, such as recomputing and comparing hash chains, should be run on a regular schedule.
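A periodic integrity check can recompute each link and report the first record that no longer matches. The sketch assumes SHA-256 over canonical JSON and records shaped as `event`, `prev_hash`, and `hash`; these names and the all-zero genesis value are illustrative assumptions.

```python
import hashlib
import json


def link_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous link together with the canonical event bytes."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def build_chain(events, genesis="0" * 64) -> list:
    """Build chained records from a sequence of events (used here to make test data)."""
    records, prev = [], genesis
    for event in events:
        digest = link_hash(prev, event)
        records.append({"event": event, "prev_hash": prev, "hash": digest})
        prev = digest
    return records


def first_broken_index(records, genesis="0" * 64):
    """Return the index of the first record that fails verification, or None."""
    prev = genesis
    for i, rec in enumerate(records):
        if rec["prev_hash"] != prev or rec["hash"] != link_hash(prev, rec["event"]):
            return i
        prev = rec["hash"]
    return None
```

Reporting the first failing index, rather than a plain pass or fail, tells an investigator where in the history to start looking.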

Operating a High Volume Audit Logging System

Monitoring

Track:

- ingestion rate

- pipeline latency

- dropped events

- error rates

- storage utilisation

Alerting

Alerts should trigger for unusual event volumes or ingestion failures.

Capacity planning

Capacity projections should estimate storage and throughput growth from current volumes and observed growth rates.
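A first order projection is simple compound growth arithmetic. The function and its parameters below are hypothetical, and the model ignores compression, indexes, and tiering, all of which change the real figure.

```python
def projected_storage_gb(events_per_month, avg_event_bytes, monthly_growth, months, replicas=1):
    """Estimate cumulative raw storage (in GB) after `months` of compounding growth."""
    total_events = 0.0
    volume = float(events_per_month)
    for _ in range(months):
        total_events += volume
        volume *= 1.0 + monthly_growth  # next month's volume grows by the given rate
    return total_events * avg_event_bytes * replicas / 1e9
```

For example, 100 million events per month at 1,000 bytes each with no growth comes to 1,200 GB of raw data after twelve months; passing a non-zero `monthly_growth` or a `replicas` count shows how quickly that figure moves.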

Backup and disaster recovery

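Frequent backups are required to prevent the loss of historical records, and restores should be tested so that a backup is known to be usable before it is needed.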

Conclusion

Designing a high volume audit logging system is a complex engineering challenge. Such systems need to handle high throughput, guarantee event capture, support fast querying, and maintain cryptographic integrity at all times.

By using event gateways, append only stores, streaming pipelines, scalable hashing strategies, and multi tier storage, organisations can build systems that support millions of audit events reliably. These systems form the foundation for compliance, security investigations, and operational transparency at scale.

A well designed audit pipeline not only captures data but preserves trust in the accuracy and completeness of that data. As organisations grow, the importance of scalable audit logging continues to increase, and only architectures designed with these principles in mind will remain reliable under pressure.